The OpenHands Index: 3 Months Out

Written by OpenHands Team


Teams are starting to use AI agents for real engineering work, but that usage breaks down quickly at scale. There is no centralized control over what agents can access or how they behave, which makes them difficult to approve for production use. Workflows are fragmented across tools and scripts, with little ability to reuse or standardize how work gets done. Teams also lack visibility into what agents are actually doing. There is no reliable audit trail, no clear understanding of cost, and no way to improve performance over time.

As a result, most organizations get stuck. Agents show promise, but they are too risky, too opaque, and too inconsistent to scale.

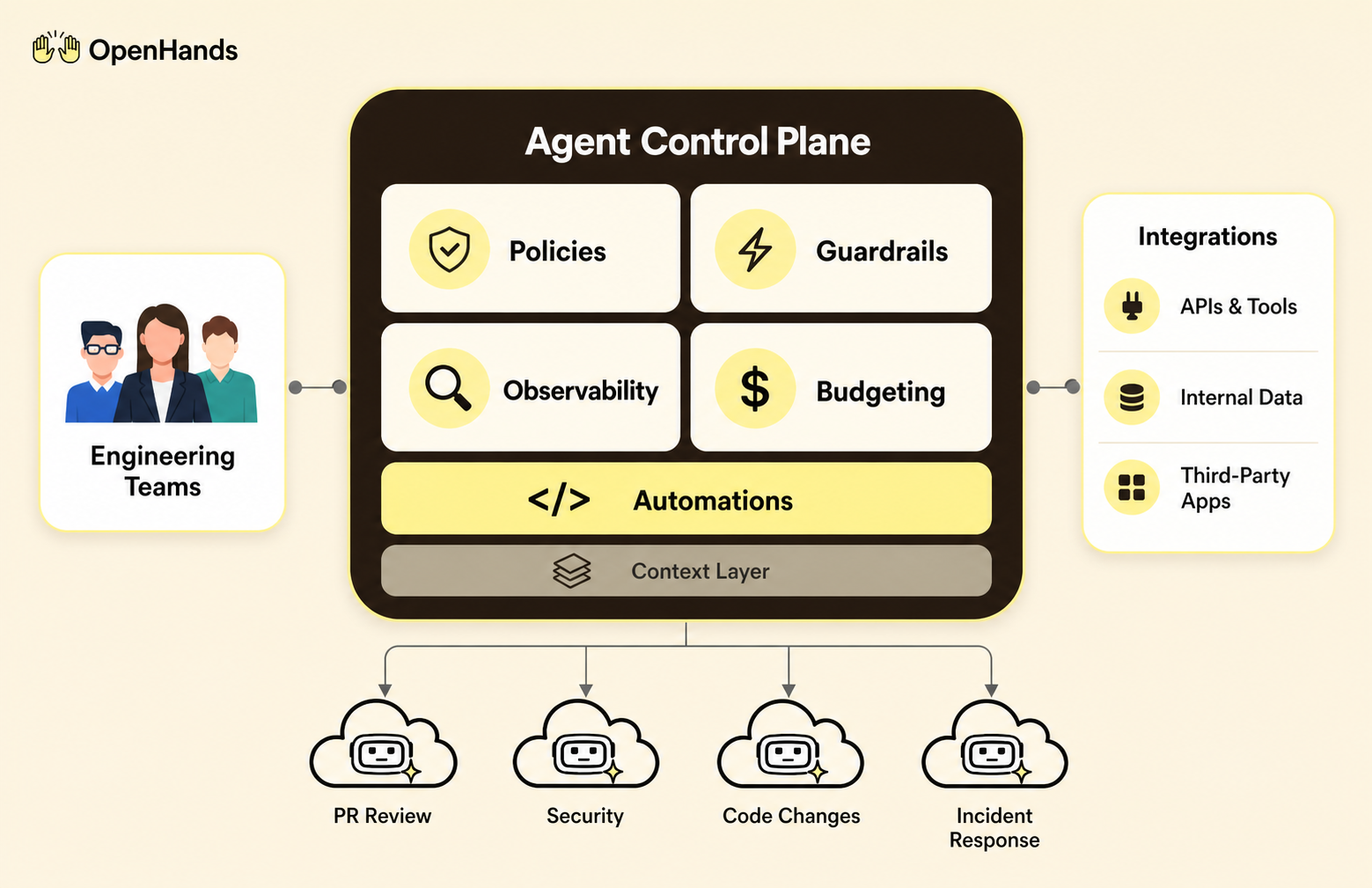

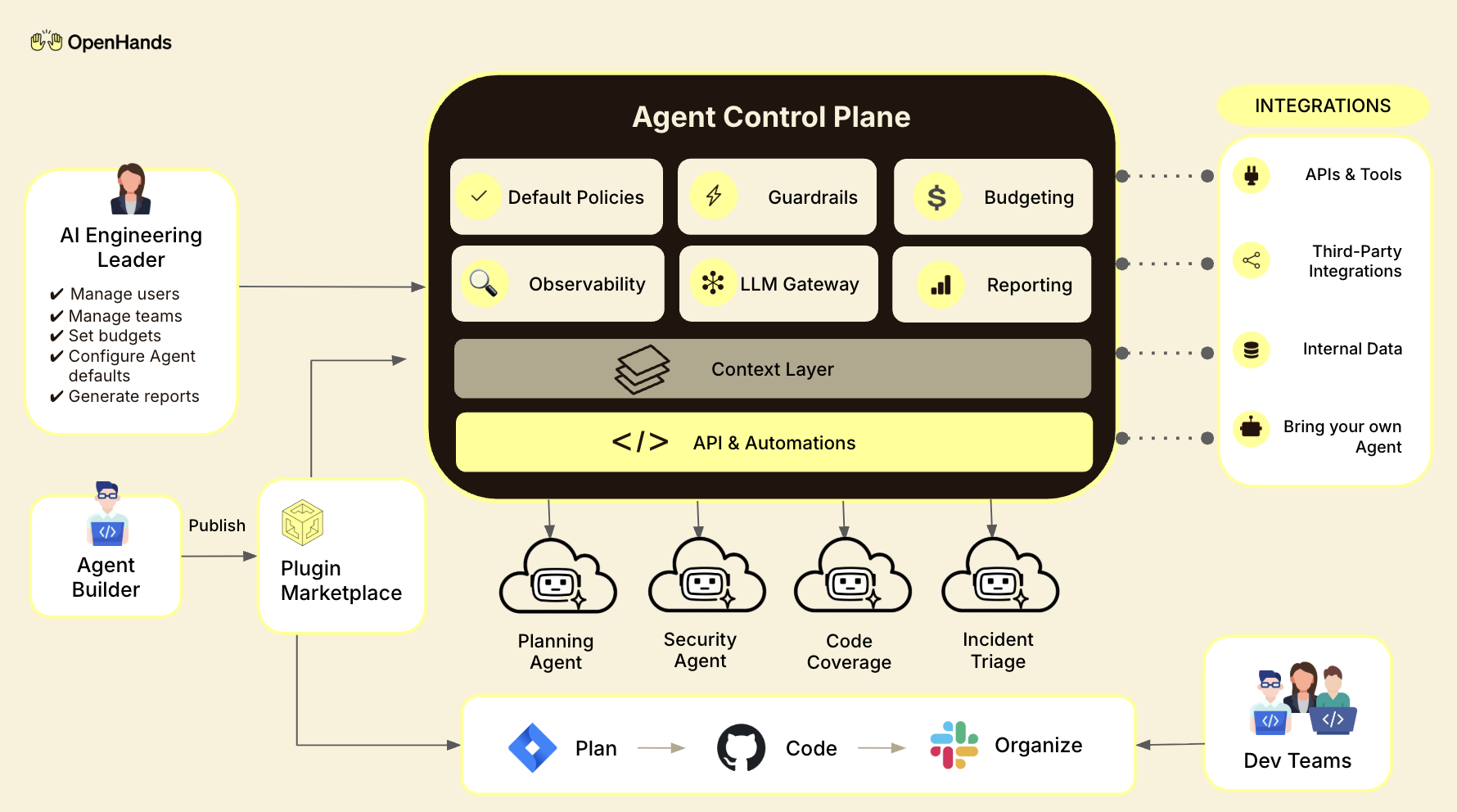

OpenHands Enterprise provides a fully self-hosted, on-premise system for running AI agents across an organization, with the infrastructure required to control, observe, and scale that work. At the center is the Agent Control Plane, which manages how agents operate across workflows, repositories, and teams. It enforces access policies, tracks usage and cost, and provides a complete record of agent activity.
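The three responsibilities named above (enforcing access policies, tracking usage and cost, and recording activity) can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the `ControlPlane` class, its fields, and its method names are hypothetical and do not reflect the actual OpenHands Enterprise API.

```python
from dataclasses import dataclass, field


@dataclass
class ControlPlane:
    """Hypothetical stand-in for a control layer; not the OpenHands API."""

    # Access policy: which repositories each team's agents may touch.
    allowed_repos: dict[str, set[str]]
    # Append-only record of every authorization decision.
    audit_log: list[dict] = field(default_factory=list)
    # Running cost total per team, in US dollars.
    cost_by_team: dict[str, float] = field(default_factory=dict)

    def authorize(self, team: str, repo: str, action: str) -> bool:
        """Check the policy and log the decision, whether allowed or denied."""
        allowed = repo in self.allowed_repos.get(team, set())
        self.audit_log.append(
            {"team": team, "repo": repo, "action": action, "allowed": allowed}
        )
        return allowed

    def record_cost(self, team: str, usd: float) -> None:
        """Add the cost of one agent run to the team's total."""
        self.cost_by_team[team] = self.cost_by_team.get(team, 0.0) + usd


plane = ControlPlane(allowed_repos={"payments": {"org/billing"}})
granted = plane.authorize("payments", "org/billing", "open_pull_request")
denied = plane.authorize("payments", "org/identity", "open_pull_request")
plane.record_cost("payments", 0.5)
plane.record_cost("payments", 0.25)
```

Denied requests are logged alongside granted ones, so the record stays complete even when an agent is blocked.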

Running agents as a system requires more than control. It requires a consistent way to define and execute work across that system.

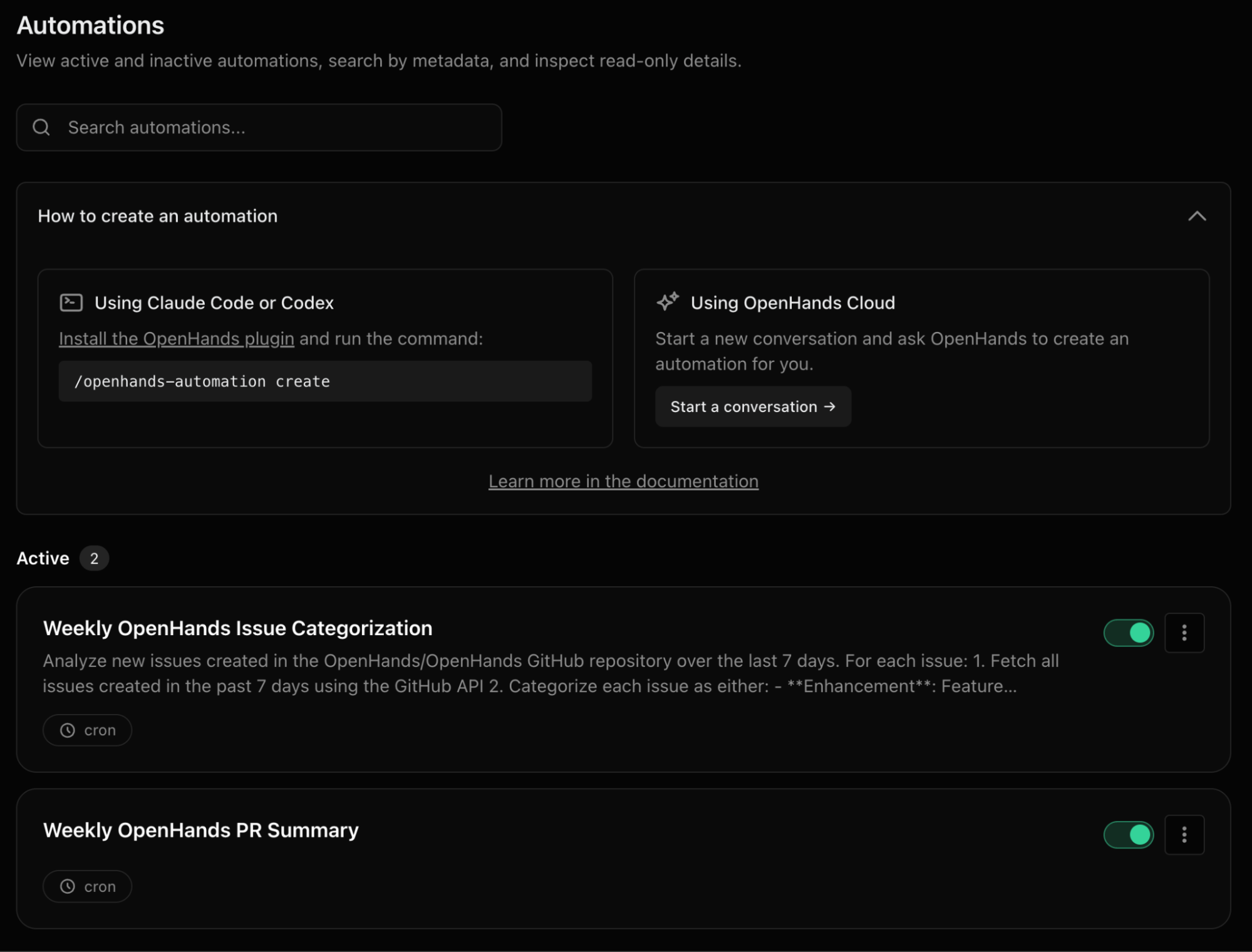

Today, we are excited to share that OpenHands Automations is now available in OpenHands Enterprise. Automations are how teams move from one-off agent usage to repeatable, system-driven workflows.

Instead of running agents manually, teams can define workflows once and run them on a schedule or trigger them based on real events across their systems. These workflows can be reused across repositories and teams, turning what was previously fragmented agent usage into a shared system for execution.
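As a rough sketch of what "define once, run on a schedule or on an event" could look like, the example below models a workflow as plain data and selects the workflows to run for a given trigger. The `Workflow` fields, the event name `pull_request.opened`, and the repository names are all hypothetical; this is not the OpenHands Automations configuration format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Workflow:
    """Hypothetical workflow definition; not the Automations schema."""

    name: str
    prompt: str
    repositories: tuple[str, ...]
    schedule_hours: Optional[int] = None   # run every N hours, if set
    on_events: frozenset[str] = frozenset()  # event types that trigger a run


def runs_for_event(workflows, event_type: str, repository: str) -> list[str]:
    """Names of workflows triggered by an event in a given repository."""
    return [
        w.name
        for w in workflows
        if event_type in w.on_events and repository in w.repositories
    ]


def due_on_schedule(workflows, hours_since_last_run: dict[str, float]) -> list[str]:
    """Names of scheduled workflows whose interval has elapsed."""
    due = []
    for w in workflows:
        if w.schedule_hours is None:
            continue  # event-only workflow
        elapsed = hours_since_last_run.get(w.name)
        # A workflow that has never run is treated as due.
        if elapsed is None or elapsed >= w.schedule_hours:
            due.append(w.name)
    return due


# One definition per workflow, shared across repositories.
WORKFLOWS = [
    Workflow(
        name="dependency-upgrade",
        prompt="Upgrade outdated dependencies and open a pull request.",
        repositories=("org/api", "org/web"),
        schedule_hours=24,
    ),
    Workflow(
        name="pr-review",
        prompt="Review the pull request and leave comments.",
        repositories=("org/api", "org/web"),
        on_events=frozenset({"pull_request.opened"}),
    ),
]
```

Because each workflow lists its repositories, extending it to another team means editing one definition, not copying a script.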

This is not limited to a single use case. It is a pattern that applies across common engineering workflows, such as code review and vulnerability remediation.

This shift is not about incremental productivity. It changes how engineering work gets done at the organizational level.

For platform, DevOps, and infrastructure leaders responsible for what runs in production, this creates a path to safely operationalize agents. Instead of one-off experiments scattered across teams, agent workflows become defined, repeatable systems that can be deployed, monitored, and improved over time. You increase output without adding headcount by moving repetitive work into systems that run consistently across the organization.

For security and compliance teams, this introduces the controls required to approve agent usage in production. Access is scoped, activity is auditable, and execution is contained within defined boundaries, so policies can be enforced and agent activity can be accounted for.

For engineering leadership and the business, this creates a more predictable model for execution. Work is no longer fragmented across tools and teams. It becomes measurable, governed, and aligned to outcomes, allowing organizations to scale automation without introducing operational risk.

The biggest change is not what agents can do. It is how developers use them. Instead of building and running isolated automations, teams define workflows once and run them across systems.

A task like code review or vulnerability remediation no longer needs to be handled manually across repositories. It becomes a repeatable workflow that runs consistently, either on a schedule or in response to events. Developers shift from executing work to defining how it should be done and reviewing the results. Over time, teams refine these workflows, improving performance without starting from scratch.

The result is a move from fragmented automation to a coordinated system for executing engineering work.

Running a single agent is a local problem. Running hundreds across an organization is a coordination problem. The Agent Control Plane is the layer that solves that. It provides centralized control over how agents operate across repositories, teams, and environments, ensuring that automation can scale without losing visibility or control.

At a technical level, it introduces a few core capabilities:

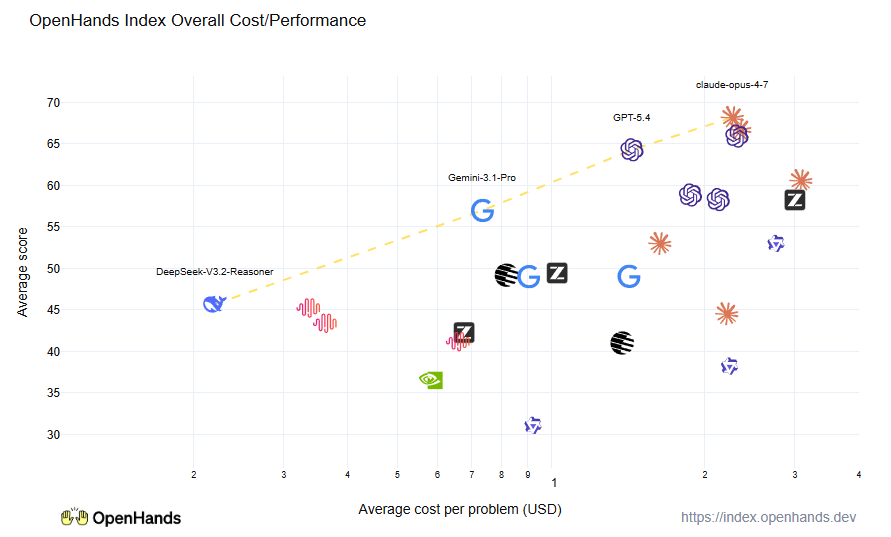

To support this, OpenHands introduced the OpenHands Index, a benchmark and leaderboard that evaluates how AI agents perform across real software engineering tasks. It provides a standardized way to compare models based on ability, cost, and runtime, helping teams choose the right model for each workflow and continuously improve how agents operate.
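One way a team might use ability, cost, and runtime figures to choose a model for a workflow is to set budget limits and take the highest-scoring model that fits. The sketch below assumes that approach. The `ModelResult` fields and the model names are hypothetical, and the numbers are placeholders, not OpenHands Index results.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelResult:
    """One leaderboard-style row; fields and values are illustrative only."""

    model: str
    score: float      # ability: fraction of tasks solved
    cost_usd: float   # average cost per task
    runtime_s: float  # average runtime per task


def pick_model(
    results: list[ModelResult], max_cost_usd: float, max_runtime_s: float
) -> Optional[ModelResult]:
    """Return the highest-scoring model within budget, cheapest on ties."""
    eligible = [
        r
        for r in results
        if r.cost_usd <= max_cost_usd and r.runtime_s <= max_runtime_s
    ]
    if not eligible:
        return None
    # Sort key: higher score first, then lower cost.
    return max(eligible, key=lambda r: (r.score, -r.cost_usd))


# Placeholder data, not real benchmark results.
RESULTS = [
    ModelResult("model-a", score=0.70, cost_usd=2.00, runtime_s=600),
    ModelResult("model-b", score=0.60, cost_usd=0.50, runtime_s=300),
    ModelResult("model-c", score=0.60, cost_usd=0.40, runtime_s=400),
]

nightly = pick_model(RESULTS, max_cost_usd=1.00, max_runtime_s=500)
critical = pick_model(RESULTS, max_cost_usd=5.00, max_runtime_s=1000)
```

With these placeholder values, a cost-sensitive scheduled workflow would get `model-c`, while a workflow with a larger budget would get `model-a`.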

Together, these capabilities turn agent usage from something ad hoc into a system that can be managed, measured, and improved over time. Teams can scale automation while maintaining control, security, and accountability.

You do not need to adopt everything at once. Most teams start small and expand over time.

OpenHands is an open-source platform for building and running autonomous AI coding agents, with the control layer needed to run them safely at scale. The mission is to make agent-based software development accessible, transparent, and controllable by default. That starts in the open. The core framework is open source, giving developers and platform teams full visibility into how agents execute work and interact with their systems. The project has over 72,000 GitHub stars, millions of downloads, and contributions from hundreds of developers. It is used by engineers at large enterprises and fast-growing startups alike. The long-term vision is to become the full-stack AI coding agent platform for software engineering, one that not only helps developers write code but also runs meaningful parts of the software lifecycle.

